DiveFace

DiveFace contains annotations equally distributed among six classes related to gender and ethnicity (two genders combined with three ethnic groups). Gender and ethnicity have been annotated following a semi-automatic process. There are 24K identities (4K per class). The average number of images per identity is 5.5, with a minimum of 3, for a total of more than 150K images. Users are grouped according to their gender (male or female) and three categories related to ethnic physical characteristics.

We are aware of the limitations of grouping all human ethnic origins into only 3 categories. According to studies, there are more than 5K ethnic groups in the world. We used only three groups in order to maximize differences among classes.
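As a quick illustration of the class layout described above, the following minimal sketch enumerates the six gender and ethnicity classes and checks that 4K identities per class add up to 24K. The group labels are placeholders (the description does not name the three groups), and the variable names are hypothetical, not part of the DiveFace distribution.

```python
from itertools import product

# Two gender labels and three ethnic groups, as in the description.
GENDERS = ("male", "female")
ETHNIC_GROUPS = ("group_1", "group_2", "group_3")  # placeholder labels (assumption)
IDS_PER_CLASS = 4_000  # "4K per class"

# Each class is one (gender, ethnic group) combination.
classes = list(product(GENDERS, ETHNIC_GROUPS))
identities = {c: IDS_PER_CLASS for c in classes}
total = sum(identities.values())

print(len(classes), total)  # 6 24000
```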

A. Morales, J. Fierrez, R. Vera-Rodriguez, “SensitiveNets: Learning Agnostic Representations with Application to Face Recognition,” arXiv:1902.00334, 2019. [pdf]

BeCAPTCHA-Mouse

BeCAPTCHA-Mouse is a benchmark for the development of bot detection technologies based on mouse dynamics. It contains more than 10K synthetic mouse trajectories generated with two methods.

A Acien, A Morales, J Fierrez, R Vera-Rodriguez, “BeCAPTCHA-Mouse: Synthetic Mouse Trajectories and Improved Bot Detection,” arXiv:2005.00890, 2020. [pdf]

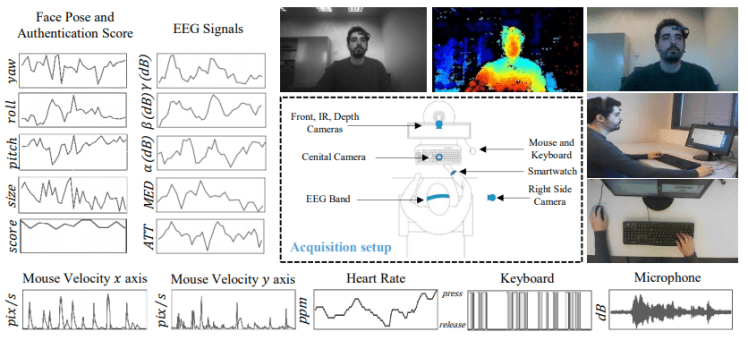

edBB: Biometrics and Behavior for Assessing Remote Education

We present a platform for student monitoring in remote education consisting of a collection of sensors and software that capture biometric and behavioral data. We define a collection of tasks to acquire behavioral data that can be useful for facing the existing challenges in automatic student monitoring during remote evaluation. Additionally, we release an initial database including data from 20 different users completing these tasks with a set of basic sensors: webcam, microphone, mouse, and keyboard; and also from more advanced sensors: NIR camera, smartwatch, additional RGB cameras, and an EEG band. Information from the computer (e.g. system logs, MAC, IP, or web browsing history) is also stored.

J Hernandez-Ortega, R Daza, A Morales, J Fierrez, J Ortega-Garcia, “edBB: Biometrics and Behavior for Assessing Remote Education,” Proc. of AAAI Workshop on Artificial Intelligence for Education (AI4EDU), New York, USA, 2020. [pdf]

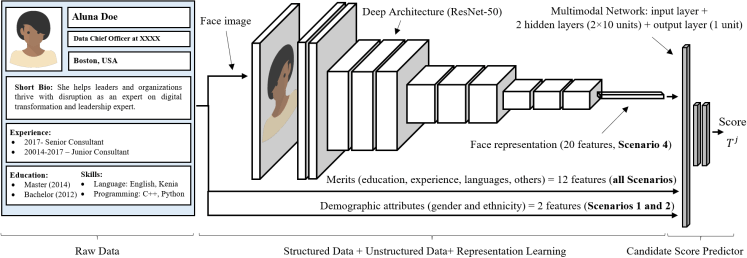

FairCVtest: Testbed for Fair Automatic Recruitment and Multimodal Bias Analysis

We present a new experimental framework for studying how multimodal machine learning is influenced by biases. The framework is designed as a fictitious automated recruitment system, which takes as input a feature vector with data obtained from a resume and predicts a score within the interval [0, 1]. We have generated 24,000 synthetic resume profiles, each including 12 features obtained from 5 information blocks, 2 demographic attributes (gender and ethnicity), and a feature embedding with 20 features extracted from a face photograph. Each profile has been associated, according to its gender and ethnicity attributes, with an identity of the DiveFace database, from which we get the face photographs.
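To make the profile layout concrete, here is a minimal sketch of one synthetic profile and a toy scoring function. It is not the FairCVtest code: the class and field names, the integer coding of the demographic attributes, the random weights, and the logistic scoring model are all assumptions chosen for illustration. Only the feature counts (12 resume features, 20 face-embedding features, 2 demographic attributes) and the [0, 1] score range come from the description above.

```python
import math
import random
from dataclasses import dataclass
from typing import List

N_RESUME = 12  # resume features per profile (from the description)
N_FACE = 20    # face-embedding features per profile (from the description)


@dataclass
class Profile:
    """Hypothetical container for one synthetic profile."""
    resume: List[float]
    face: List[float]
    gender: int     # hypothetical integer coding
    ethnicity: int  # hypothetical integer coding

    def vector(self, with_demographics: bool = False) -> List[float]:
        # Concatenate resume and face features; optionally append the
        # two demographic attributes.
        v = self.resume + self.face
        if with_demographics:
            v = v + [float(self.gender), float(self.ethnicity)]
        return v


def score(vector: List[float], weights: List[float], bias: float = 0.0) -> float:
    # Toy logistic model (an assumption): maps any input to a value in (0, 1).
    z = bias + sum(w * x for w, x in zip(weights, vector))
    return 1.0 / (1.0 + math.exp(-z))


rng = random.Random(0)
profile = Profile(
    resume=[rng.random() for _ in range(N_RESUME)],
    face=[rng.random() for _ in range(N_FACE)],
    gender=0,
    ethnicity=2,
)
weights = [rng.uniform(-1, 1) for _ in range(N_RESUME + N_FACE)]
s = score(profile.vector(), weights)
print(f"{len(profile.vector())} features -> score {s:.3f}")
```

Keeping the demographic attributes out of the default feature vector makes it easy to compare a model trained with and without them, which is the kind of bias analysis the testbed targets.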

A. Peña, I. Serna, A. Morales, J. Fierrez, “Bias in Multimodal AI: Testbed for Fair Automatic Recruitment,” Proc. of IEEE CVPR Workshop on Fair, Data Efficient and Trusted Computer Vision, Seattle, Washington, USA, 2020. [pdf][Github]

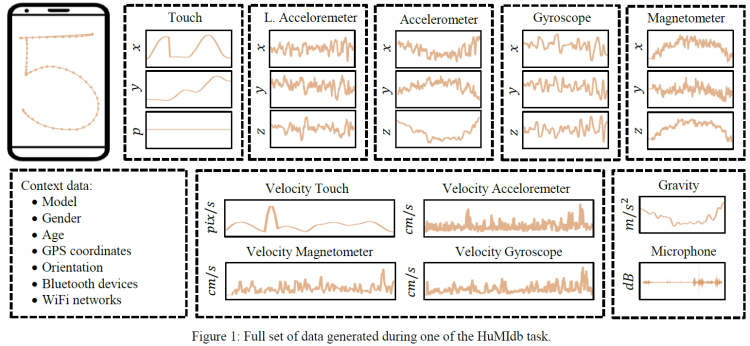

HuMIdb dataset: Human Mobile Interaction database

We present the HuMIdb dataset, a novel multimodal mobile database that comprises more than 5 GB of data from a wide range of mobile sensors, acquired in an unsupervised scenario. The dataset includes signals from 14 sensors (see Table 1 for the details) captured during natural human-mobile interaction performed by more than 600 users. For the acquisition, we implemented an Android application that collects the sensor signals while the users complete 8 simple tasks with their own smartphones and without any supervision whatsoever (i.e., the users could be standing, sitting, walking, indoors, outdoors, at daytime or night, etc.). All data captured in this database have been anonymized and stored on private servers, with prior participant consent, according to the GDPR (General Data Protection Regulation).

A. Acien, A. Morales, J. Fierrez, R. Vera-Rodriguez, O. Delgado, “BeCAPTCHA: Bot Detection in Smartphone Interaction using Touchscreen Biometrics and Mobile Sensors,” arXiv, 2020. [pdf][Github]

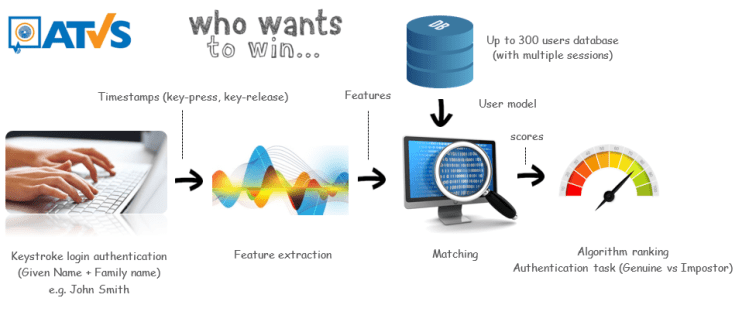

KBOC: Keystroke Biometrics Ongoing Competition

KBOC is the first ongoing keystroke competition, designed to overcome the limitations of traditional competitions based on a static snapshot of the state of the art. In addition, the competition includes a public benchmark involving 3600 keystroke sequences from 300 users, simulating a realistic scenario in which each user types their own sequence (given name and family name), and 3600 impostor attacks (users who try to spoof the identity of others).

A. Morales, J. Fierrez, R. Tolosana, J. Ortega-Garcia, J. Galbally, M. Gomez-Barrero, A. Anjos and S. Marcel, “Keystroke Biometrics Ongoing Competition,” IEEE Access, vol. 4, pp. 7736-7746, November 2016. [Link]

A. Morales, J. Fierrez, M. Gomez-Barrero, J. Ortega-Garcia, R. Daza, J.V. Monaco, J. Montalvão, J. Canuto, A. George, “KBOC: Keystroke Biometrics OnGoing Competition,” Proc. IEEE International Conference on Biometrics: Theory, Applications, and Systems, Buffalo, USA, 2016.

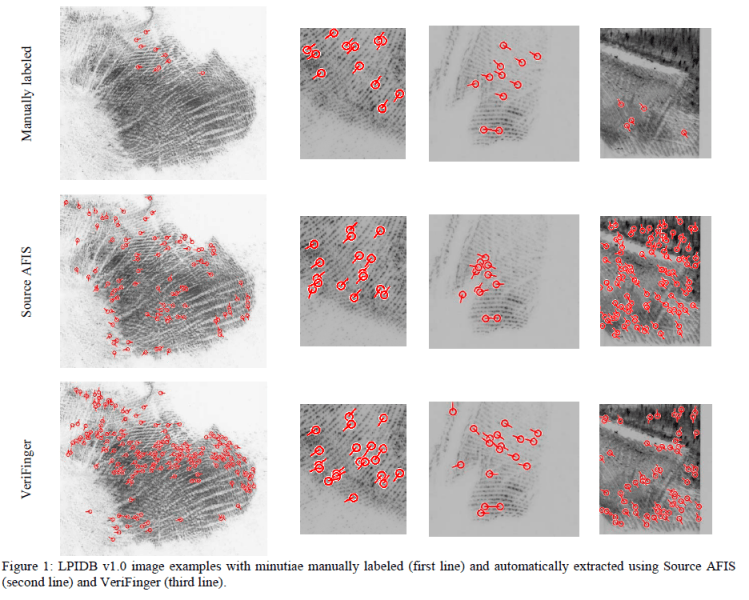

LPIDB: Latent Palmprint Identification Database

The latent palmprint identification database has been acquired under laboratory conditions. It is a single-session database composed of 380 latent palmprints from 100 different palms of 51 donors (28 male and 23 female). The age of the donors ranges between 4 and 81 years, with several occupations represented (manual workers, office workers, students). Each donor contributed two impressions (right and left hands) and multiple latent prints that simulate realistic scenarios under different poses. These poses are based on actions such as opening a door, pushing a chair, grasping a knife, leaning on a table, and carrying objects of different weights, among others. For more details see:

Aythami Morales, Miguel Angel Medina-Perez, Miguel A. Ferrer, Milton Garcia-Borroto, Leopoldo Altamirano Robles, “LPIDB v1.0 – Latent Palmprint Identification Database,” in Proc. of International Joint Conference on Biometrics, Florida, 2014.